@o_lampe said in Spirograph emulator with Duet2:

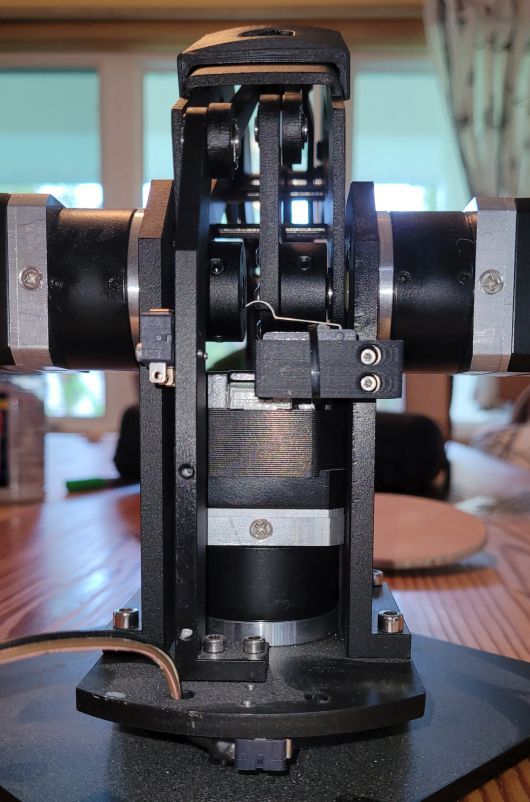

Would it be possible to add a rotary tool to a CoreXY frame?

The current RRF firmware treats a tool as vertical, non-rotating and non-moving, so it can be described by fixed XYZ offsets (mesh compensation, babystepping and tool changing, for example, all depend on these linear offsets).

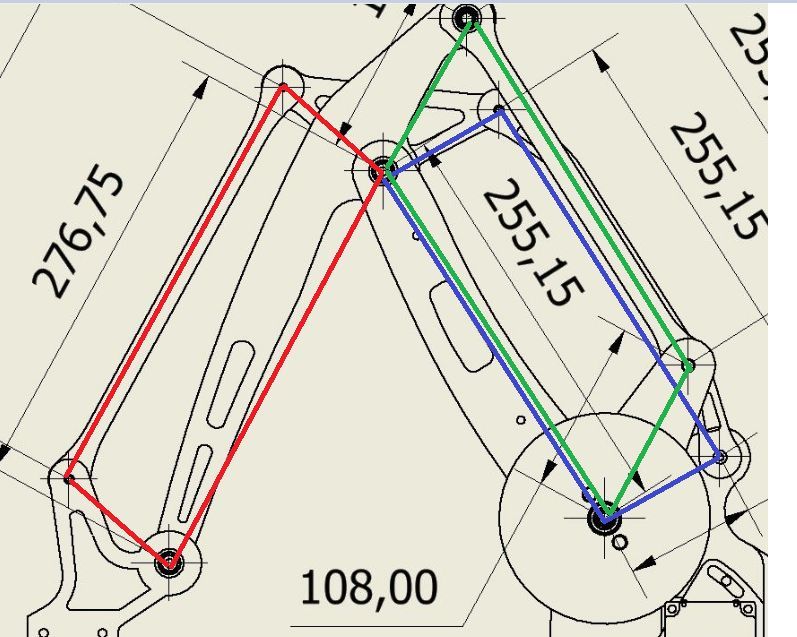

To avoid these limitations, the tool itself can be divided into a moving/rotating axis and a fixed endpoint, which can have zero dimensions. With robot kinematics, you simply add this additional axis to the kinematic chain. Forward kinematics is easy to calculate, but inverse kinematics (calculating motor positions back from world coordinates) can be complicated. Whether it is complicated depends on the implementation and on where the axes are placed.
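To make the forward/inverse distinction concrete, here is a minimal sketch of the simplest case, assuming a hypothetical rotary tool: the tip sits on an arm of length `r` that rotates about a vertical axis through the CoreXY head, and the tool angle `theta` is an extra axis chosen by the caller. The CoreXY mapping uses the common convention A = X + Y, B = X - Y; RRF's own sign and scaling conventions may differ. This is an illustration, not RRF code.

```python
import math

def corexy_inverse(x, y):
    """Head XY position -> CoreXY belt positions (assumed convention A = X+Y, B = X-Y)."""
    return x + y, x - y

def corexy_forward(a, b):
    """CoreXY belt positions -> head XY position."""
    return (a + b) / 2.0, (a - b) / 2.0

def tool_forward(a, b, z, theta, r):
    """Forward kinematics: motors + tool angle -> tool tip in world coordinates.

    Hypothetical tool: tip on an arm of length r rotating about a vertical axis
    through the head. The tip offset depends on theta, so it is not a fixed XYZ offset.
    """
    hx, hy = corexy_forward(a, b)
    return hx + r * math.cos(theta), hy + r * math.sin(theta), z

def tool_inverse(tx, ty, tz, theta, r):
    """Inverse kinematics: desired tip position + given tool angle -> motors.

    Because theta is given, the offset is known and a subtraction is enough.
    """
    hx = tx - r * math.cos(theta)
    hy = ty - r * math.sin(theta)
    a, b = corexy_inverse(hx, hy)
    return a, b, tz

# Round trip: inverse then forward should return the requested tip position
theta = math.radians(30)
a, b, z = tool_inverse(100.0, 50.0, 0.2, theta, 20.0)
print(tool_forward(a, b, z, theta, 20.0))  # approximately (100.0, 50.0, 0.2)
```

The inverse stays trivial here only because `theta` is an independent input. Once the tool angle has to be derived from the target pose, or several rotating axes are chained, the inverse is where the real work is.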

If you design your tool as a spherical wrist, so that the added axes intersect the preceding axes in one point, the inverse calculation is easier, because the tip position and the tool orientation can then be solved separately. When you have a prototype and a description, we can solve it together.
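A minimal sketch of that decoupling, assuming a hypothetical two-axis wrist: `pan` rotates about world Z, `tilt` tilts the tool away from straight down, both axes pass through a wrist centre, and the tip sits a distance `d` from that centre. The names and angle conventions are assumptions for illustration; the CoreXY step again uses the common A = X + Y, B = X - Y convention.

```python
import math

def wrist_inverse(p, a, d):
    """Split a target tool pose into wrist-centre position and wrist angles.

    p: desired tip position (x, y, z)
    a: desired tool direction, unit vector from wrist centre towards the tip
    d: distance from wrist centre to tip
    """
    # Axes intersect at the wrist centre, so the tip is always d along a from it.
    wc = tuple(pi - d * ai for pi, ai in zip(p, a))
    # Assumed orientation model: a = (sin(tilt)cos(pan), sin(tilt)sin(pan), -cos(tilt))
    tilt = math.acos(max(-1.0, min(1.0, -a[2])))
    # At tilt == 0 the pan angle is arbitrary (a singularity); keep it at 0.
    pan = math.atan2(a[1], a[0]) if math.hypot(a[0], a[1]) > 1e-12 else 0.0
    return wc, pan, tilt

def wrist_forward(wc, pan, tilt, d):
    """Forward kinematics of the same wrist: centre + angles -> tip position."""
    a = (math.sin(tilt) * math.cos(pan), math.sin(tilt) * math.sin(pan), -math.cos(tilt))
    return tuple(w + d * ai for w, ai in zip(wc, a))

def pose_to_motors(p, a, d):
    """Wrist angles come from the orientation alone; XYZ axes only position the centre."""
    (x, y, z), pan, tilt = wrist_inverse(p, a, d)
    return x + y, x - y, z, pan, tilt  # CoreXY A, B, then Z and the two wrist angles

s = math.sqrt(0.5)
print(pose_to_motors((100.0, 50.0, 5.0), (s, 0.0, -s), 10.0))
```

The point of the design choice is visible in `pose_to_motors`: orientation fixes the wrist angles directly, and the ordinary XYZ kinematics only ever sees the wrist centre, so no coupled system of equations has to be solved.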